Dice

Background

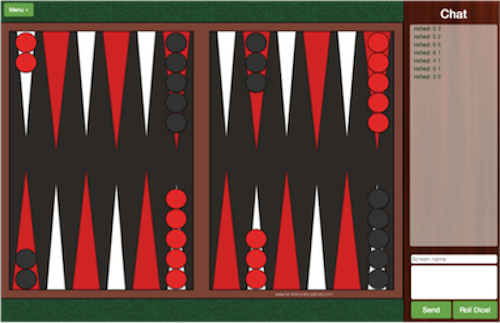

Last week I had the pleasure of working with @j_scag_, @jamesjtong, @ivanbrennan, and @ahimmelstoss on this amazing gametable application. This rails based gaming platform lets users play chess, checkers, backgammon and chinese-checkers online with friends far and wide.

The game play is made possible by the Sync gem Pusher. Sync and Pusher allow for realtime refreshing and updating of partials on a given game page so that every time a user moves a game piece or enters text into the chat, those movements/chats can be seen by other players of the game on different computers.

For the backgammon game (and future games that require dice), we needed to implement a way for players to roll dice. We implemented this functionality using the existing chat functionality that had already been built.

Chat

The chat function routed messages to the following action on the MessagesController:

1 2 3 4 5 6 7 8 | |

This action creates a new message with the desired contents and then syncs those contents across the different users of the game. When the message box partial is refreshed, the new message that was created by the action is now included among the messages for that game that get displayed in the message box to the right of the game board.

1 2 3 4 5 6 7 8 | |

Dice

Similarly, the dice functionality routed to the dice action on the MessagesController:

1 2 3 4 5 6 7 8 | |

This action is almost indentical to the create action save for two importance differences. First, the content is set to two randomly genereated numbers between 1 and 6 (#{rand(6)+1} #{rand(6)+1}). Second, the sources is taged as computer, instead of user :source => "computer" .

Authentication

Given that our platform had no user authentication, we needed a way to make sure that users were actually rolling the dice instead of just changing their chat name and entering the dice rolls by hand. To accomplish this, we put some logic in the chat message partial that colored the text green when the source was the computer (i.e., dice roll) and left the text uncolored when the source was one of the users on the system. The logic in the partial for this is as follows:

1 2 3 4 | |

Somthing you may notice here is the strange line breaks within the opening <li> tag. This was necessary because when the erb code logic that checked for the source was all on one line it did not render appropriately.

Final thoughts

I really like how this dice functionality fits in with the existing messaging framework built into the application. It fits in with other simple but elegent solutions in this app like using secure game room codes http://gametable.co/games/56b3f2d83c82304ce036cec5c97435d7instead of users. The next step is to add some new games that need dice.